AI aligned with the planet.

The Earth Alignment Principle — ERI's framework for ensuring AI deployment serves both business performance and planetary boundaries. Responsible by design, not by afterthought.

The Earth Alignment Principle

AI must be aligned with planetary stability — not just human values.

In April 2025, ERI co-founder Owen Gaffney and colleagues published a landmark commentary in Nature Sustainability proposing the Earth Alignment Principle: AI development, deployment, and use must align with a goal of halving emissions every decade.

The paper's central concern is not AI's direct energy footprint — it is that AI is being embedded into the economic systems that have driven emissions upward for a century. Without deliberate alignment, AI accelerates those systems rather than transforming them.

Owen Gaffney is a global sustainability analyst and co-founder of the Exponential Roadmap Initiative. He leads ERI's research on the intersection of AI and planetary boundaries, and is the lead author of the Earth Alignment Principle published in Nature Sustainability (2025).

"There is much talk about the potential of AI to drive down greenhouse gas emissions. But the irony is that data centre emissions are rising rapidly, due to AI expansion, and, more worryingly, AI is being embedded in the very economic systems that have been driving up emissions for a century or more."

The Principle

AI business models must be aligned not only with human values, but with a goal of halving emissions every decade — consistent with planetary stability and the 1.5°C pathway.
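To make "halving emissions every decade" concrete, the sketch below converts the target into an equivalent yearly cut. It assumes a constant percentage reduction each year, which is an illustrative simplification and not something the commentary specifies. The function names are hypothetical.

```python
def annual_reduction_rate(halving_years: float = 10.0) -> float:
    """Constant yearly fractional cut that halves emissions in `halving_years`.

    Assumption: emissions fall by the same percentage every year.
    """
    return 1.0 - 0.5 ** (1.0 / halving_years)


def remaining_fraction(years: float, halving_years: float = 10.0) -> float:
    """Fraction of starting emissions left after `years` on a halving pathway."""
    return 0.5 ** (years / halving_years)


# Yearly cut implied by halving every decade, under the constant-rate assumption.
print(f"{annual_reduction_rate():.1%}")

# Three halvings over thirty years leave one eighth of the starting level.
print(remaining_fraction(30))
```

Under that assumption, halving every decade corresponds to cutting emissions by roughly 6.7% each year, and three decades on the pathway leave one eighth of the starting level.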

AI as Climate Influencer

AI agents are becoming trusted advisors. Whose interests do they serve?

The AI Agents of Change white paper (ERI, Climate Collective & Spotify, October 2025) identifies four tipping points that determine whether AI agents drive deep emissions cuts or turbocharge unsustainable consumption. Household consumption accounts for more than 60% of global greenhouse gas emissions, which makes the everyday choices AI agents influence one of the highest-leverage points of intervention available.

Default to sustainable

AI agents that surface low-carbon options first — in purchasing, travel, food, and finance — can make sustainable choices the path of least resistance rather than the deliberate exception.
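One way to picture "low-carbon first" is as a sort order applied before options reach the user. The sketch below is a minimal illustration, not ERI's or the white paper's implementation: the `Option` type, the `kg_co2e` field, and every number in the sample data are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    kg_co2e: float  # hypothetical emissions estimate for this option
    price: float    # hypothetical price, used only to break ties


def rank_low_carbon_first(options: list[Option]) -> list[Option]:
    """Return options ordered by estimated emissions, then by price."""
    return sorted(options, key=lambda o: (o.kg_co2e, o.price))


# Placeholder values for illustration only, not real emissions data.
options = [
    Option("flight", kg_co2e=120.0, price=90.0),
    Option("train", kg_co2e=15.0, price=110.0),
    Option("coach", kg_co2e=25.0, price=40.0),
]

for option in rank_low_carbon_first(options):
    print(option.name, option.kg_co2e)
```

All options remain available in this sketch. Only the order changes, so the lowest-emission choice is the first one the user sees.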

Personalise the case

Generic sustainability messaging fails. AI that connects planetary impact to individual values and circumstances creates the emotional resonance that drives lasting behaviour change.

Shift market signals

When millions of AI-guided decisions consistently favour low-carbon options, the aggregate market signal changes — rewarding sustainable producers and penalising high-emission incumbents.

Embed in cultural norms

AI agents that normalise sustainable behaviour across social contexts — from workplace tools to consumer platforms — accelerate the cultural tipping point where low-carbon becomes the expected default.

ERI's Responsible AI Commitments

Principles we hold ourselves to — not just others.

The Earth Alignment Principle is not only a position ERI advocates externally. It also governs how ERI designs its own Human-AI missions, selects use cases, and measures outcomes.

Earth-aligned missions

Every ERI agentic mission is scoped against a measurable climate outcome. We do not deploy AI capability for efficiency alone — the outcome must contribute to halving emissions every decade.

Science-based outcome standards

ERI's outcomes are measured against the Climate Solutions Framework — science-anchored criteria for what genuinely counts as a climate solution. AI-generated outputs are held to the same standard.

Transparent about risk

We acknowledge that AI embedded in existing economic systems can amplify the very dynamics driving emissions upward. ERI's operating model is designed to redirect that capability, not ignore the risk.

Grounded in peer-reviewed research

ERI's responsible AI position is not a policy statement — it is grounded in published research, including Gaffney et al. (2025) in Nature Sustainability and the Agents of Change white paper (2025).

The Science Basis

Alignment is not a values question — it is a risk management question.

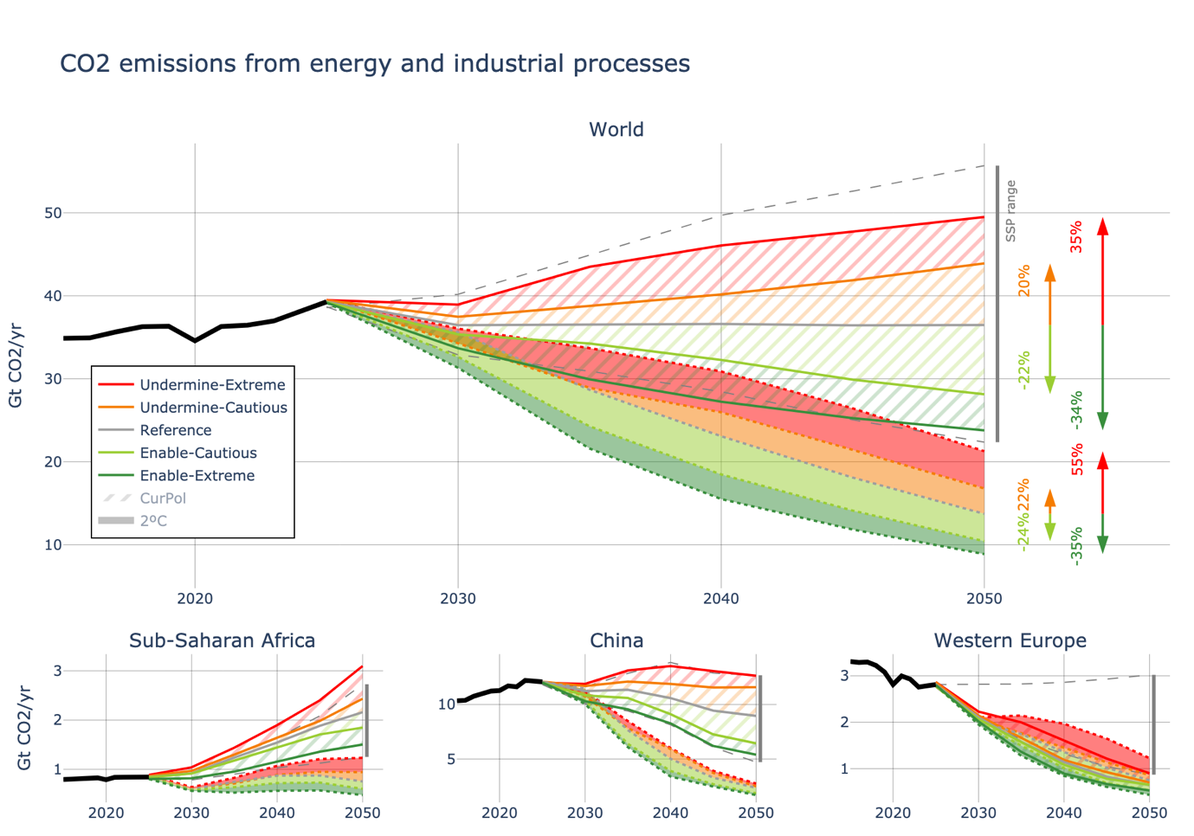

New modelling by Wilson et al. (2026, under review at Nature Portfolio) quantifies what is at stake. Using a global integrated framework across buildings, transport, industry, and energy systems to 2050, the researchers show that the alignment or misalignment of AI with climate goals is the single most consequential variable in determining whether the Paris Agreement remains achievable. Its effect is comparable in magnitude to all other global demographic, economic, and technological uncertainties combined.

Energy investment saved

÷ 2

$75T → $35T with Earth-aligned AI + strong policy

Net-zero year (Enable-Extreme)

2047

16 years earlier than the reference scenario

Probability of staying below 2°C

82% → 69%

Enable-Extreme vs Undermine-Extreme — near the IPCC 'likely' threshold

Near-term AI assessments

Optimism-biased

IEA and Stern et al. capture only the emission-reducing side; rebound and induced-demand effects are excluded.

What becomes possible

Earth-aligned AI at full maturity is not a constraint — it is the competitive advantage.

At AI Maturity Level 4, organisations do not just apply responsible AI principles — they operationalise them at scale. The Climate Solutions Framework runs autonomously. Greenwashing is detected in real time. Planetary boundaries become model parameters, not policy aspirations.